Today I spoke at F8 about how the Applied Machine Learning (AML) team at Facebook is working with dozens of product teams across the company to give people communication superpowers through AI.

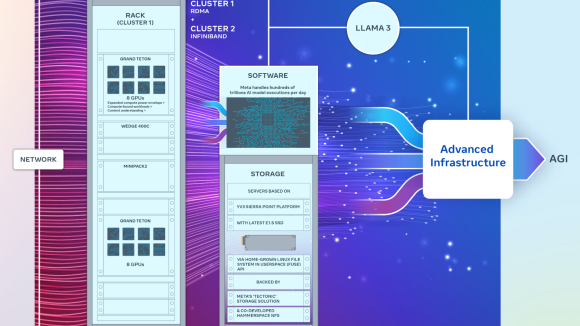

To do this, we built an AI backbone that powers much of the Facebook experience and is actively used by more than 25 percent of all engineers across the company. It runs on a massive 40-PFLOPS GPU cluster, which teams use to train very large models with billions of parameters on data sets of trillions of examples. As a result, teams across the company are running 50x more AI experiments per day than they were a year ago, which means research is moving into production faster than ever.

Here are a few of the ways AI powers Facebook experiences, along with some demos that give a glimpse of what could become possible as we continue to research and advance the state of the art in AI.

Translation

Today, half of the Facebook community does not speak English, and most people don’t speak each other’s languages. To remove these communication barriers, the Applied Machine Learning team built an AI-based automatic translation system that helps 800 million people every month see translated posts in their News Feed. Traditional off-the-shelf solutions wouldn’t work well here, because they tend to be trained on general, formal text such as appliance manuals, which differs from the language people use on our platform. Facebook is all about human-to-human language: It’s alive, people coin new expressions all the time, they abbreviate their words, there are regional differences, and there are emojis.

Photo image search

When you think back on your favorite memories, it can be hard to remember exactly when something happened or who took the photo that captured the moment. With Facebook’s automatic image classifiers, you can imagine someone searching through all the photos their friends have shared to find a particular one based on what the image contains, rather than relying on tags or surrounding text. This is pretty cool, but we can take it a step further.

Talking pictures

We are currently building systems that understand images at the level of individual pixels rather than just classifying the image as a whole. This is called image segmentation, and it allows us to recognize the individual objects in an image as well as how they relate to one another. Using image segmentation, we will be able to build more immersive experiences for people who are visually impaired, such as “talking images” they can read with their fingertips, as well as more powerful ways to search images. In one example, we can search for “a photo of us five on skis on the snow, with a lake in the background and trees on both sides.”
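To make the difference between classifying a whole image and labeling every pixel concrete, here is a minimal Python sketch. It assumes a hypothetical model has already produced a score for every class at every pixel; the hand-written `scores`, the `LABELS` list, and the helpers `argmax`, `segment`, and `classify` are illustrative only and are not Facebook’s system. It shows per-pixel (semantic) labeling; separating individual object instances and working out how they relate would take further steps on top of this.

```python
from typing import List

# scores[y][x][c]: a (hypothetical) model's score for class c at pixel (y, x).
Scores = List[List[List[float]]]


def argmax(values: List[float]) -> int:
    """Return the index of the largest value (the first one wins on ties)."""
    return max(range(len(values)), key=lambda i: values[i])


def segment(scores: Scores) -> List[List[int]]:
    """Segmentation: pick the highest-scoring class for every pixel."""
    return [[argmax(pixel) for pixel in row] for row in scores]


def classify(scores: Scores) -> int:
    """Classification: one label for the whole image. Averaging the
    per-pixel scores is an illustrative choice, not the only option."""
    num_classes = len(scores[0][0])
    totals = [0.0] * num_classes
    count = 0
    for row in scores:
        for pixel in row:
            for c in range(num_classes):
                totals[c] += pixel[c]
            count += 1
    return argmax([total / count for total in totals])


# Toy 2x3 "image" with hand-written scores for three classes.
LABELS = ["snow", "tree", "lake"]
scores = [
    [[0.1, 0.8, 0.1], [0.7, 0.2, 0.1], [0.1, 0.8, 0.1]],
    [[0.6, 0.1, 0.3], [0.2, 0.1, 0.7], [0.8, 0.1, 0.1]],
]

print([[LABELS[c] for c in row] for row in segment(scores)])
# [['tree', 'snow', 'tree'], ['snow', 'lake', 'snow']]
print(LABELS[classify(scores)])
# snow
```

The whole-image label collapses to “snow”, while the per-pixel map still records where the trees and the lake are, which is the kind of information a query like the ski photo above would need.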

Real-time video classification

To build tools that organize the content you want to see, we also need to classify live videos in real time. The demo here uses the same computer vision techniques to watch videos and, in real time, understand and classify what’s in them without relying on tags or surrounding content. We have published our findings on training video models that perform convolutions in both space and time to recognize the actions, objects, and scenes in a given video.
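The idea of convolving in both space and time can be illustrated with a minimal single-channel 3D convolution in plain Python. This is a sketch under simplifying assumptions (one channel, no padding, stride 1, and a hand-picked `kernel` instead of learned weights); the function name `conv3d` and the toy `video` clip are hypothetical, and real video models stack many learned multi-channel 3D filters.

```python
from typing import List

# A single-channel video clip or kernel, indexed as [t][y][x].
Volume = List[List[List[float]]]


def conv3d(video: Volume, kernel: Volume) -> Volume:
    """Stride-1, no-padding ("valid") 3D convolution of one channel.
    Like most deep learning libraries, this computes cross-correlation,
    i.e. the kernel is not flipped."""
    T, H, W = len(video), len(video[0]), len(video[0][0])
    kt, kh, kw = len(kernel), len(kernel[0]), len(kernel[0][0])
    out: Volume = []
    for t in range(T - kt + 1):
        plane = []
        for y in range(H - kh + 1):
            row = []
            for x in range(W - kw + 1):
                # Sum over a window spanning kt frames and a kh x kw patch.
                acc = 0.0
                for dt in range(kt):
                    for dy in range(kh):
                        for dx in range(kw):
                            acc += video[t + dt][y + dy][x + dx] * kernel[dt][dy][dx]
                row.append(acc)
            plane.append(row)
        out.append(plane)
    return out


# Three 1x3 frames of a bright dot moving one pixel to the right per frame.
video = [
    [[1.0, 0.0, 0.0]],
    [[0.0, 1.0, 0.0]],
    [[0.0, 0.0, 1.0]],
]
# Hand-picked 2x1x1 kernel: next frame minus current frame (a change detector).
kernel = [[[-1.0]], [[1.0]]]

print(conv3d(video, kernel))
# [[[-1.0, 1.0, 0.0]], [[0.0, -1.0, 1.0]]]
```

Because this kernel spans two frames, its output marks where the dot left (-1.0) and where it arrived (1.0). A purely spatial 2D kernel applied to each frame separately could not see that change, which is why adding the time dimension helps with recognizing actions.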

AI is central to today’s Facebook experience, and, with our research pushing the state of the art, we’re just getting started on this journey. I’m excited to see where it takes us next.