Site Reliability Engineering (SRE) at Facebook is always under pressure to keep the site and all the moving pieces behind the scenes running while still delivering an excellent user experience. The recent launch of usernames to our 200 million active users, on a single night and at the exact same time, was unique in both the preparation it required and its potential for trouble.

Our product teams evaluated the various options for letting people register a username and decided on a single first-come, first-served registration window for every user. Although this was the fairest option, it was difficult operationally because we could not predict how many users would show up at that time to claim a name. The Memcached infrastructure that runs behind every page on the site was partitioned and expanded to cope with users checking the availability of names. It also held our list of blocked names such as Pizza, RonPaul, and Beer. One terabyte of in-memory cache was dedicated exclusively to the username launch. Most pages on Facebook are composed of multiple AJAX calls and other JavaScript/CSS resources, but the registration page for usernames was stripped down as much as possible so that it added very little load to our web servers. Users were directed straight to this page to register, bypassing the more intensive parts of Facebook.
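To make the availability check concrete, here is a minimal sketch of a lookup against a blocked list and a partitioned in-memory cache. It is an illustration under stated assumptions, not our production code: `ShardedCache`, `is_available`, and `mark_taken` are hypothetical names, the cache is a set of plain Python dictionaries rather than a real Memcached client, and hashing the name to pick a partition is just one common way to spread keys.

```python
import hashlib

# Blocked names taken from the examples in this post (stored lowercase).
BLOCKED_NAMES = {"pizza", "ronpaul", "beer"}


class ShardedCache:
    """Toy stand-in for a partitioned in-memory cache (not a Memcached client)."""

    def __init__(self, num_shards):
        self.shards = [dict() for _ in range(num_shards)]

    def _shard_for(self, key):
        # Hash the key so names spread across partitions deterministically.
        digest = hashlib.md5(key.encode("utf-8")).hexdigest()
        return self.shards[int(digest, 16) % len(self.shards)]

    def get(self, key):
        return self._shard_for(key).get(key)

    def set(self, key, value):
        self._shard_for(key)[key] = value


def is_available(cache, name):
    """Return True if the name is neither blocked nor already recorded as taken."""
    normalized = name.lower()
    if normalized in BLOCKED_NAMES:
        return False
    return cache.get(normalized) is None


def mark_taken(cache, name, user_id):
    """Record that a name has been registered (assumed to follow a durable write)."""
    cache.set(name.lower(), user_id)
```

In this sketch the cache only answers "is this name free?" quickly; the authoritative record of who owns a name is assumed to live elsewhere.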

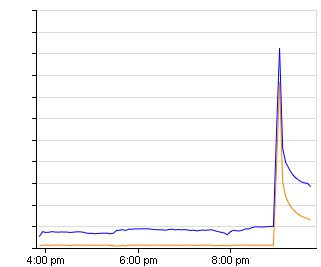

We intentionally chose to launch the feature at a time of low site activity (Friday at 9PM Pacific) to leave room for the expected extra demand, and assembled people from many groups within Facebook in our “War Room” during the launch. The software engineers who wrote the code, network, system, and security engineers, SREs, product managers, and anybody else who wanted to help out gathered for a few hours, eating Chinese food, drinking beer, and enabling usernames on Facebook. The launch of usernames was unprecedented because of its potential to affect the experience and stability of Facebook in a very real way. About a month before the big night we began planning for any possible contingency by performing background load testing with normal traffic. Additionally, we developed a comprehensive set of levers and knobs that would let us alter site functionality to cope with the extra demand.

- “Dark Launching”: During the two weeks prior to launch we began what we call a “dark launch” of all the functionality on the backend. Essentially, a subset of user requests also makes “silent” queries to the code that, on launch night, will have to absorb the traffic. This exposes pain points and areas of our infrastructure that need attention before the actual launch. For example, increasing the demand on one subsystem may generate more logs than anticipated and overwhelm analysis processes, or unexpected network bottlenecks may appear. “Dark launching” allows us to stress test parts of Facebook before anything is apparent to users, while still simulating the full effect of launching the code to them.

- Levers and the “Nuclear Option”: We couldn’t be sure of the exact level of traffic the launch would generate, so we put together contingency plans to decrease the load on various parts of the site. This gave us headroom in core functionality at the expense of less essential services. Facebook comprises hundreds of interlocking systems, although to users it’s presented as a simple web page. Throttling back the behavior of certain facets allows us to lighten the demand on our infrastructure without compromising major site functionality. Most of these changes take less than a minute to make.
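The dark-launch idea described above can be sketched in a few lines. This is a simplified illustration, not the code we ran: `DarkLaunch`, `handle_request`, `serve_page`, and `silent_query` are hypothetical names, and sampling requests at random is an assumption about how a subset might be chosen.

```python
import random


class DarkLaunch:
    """Runs a "silent" query for a sampled fraction of requests."""

    def __init__(self, sample_rate, rng=random.random):
        self.sample_rate = sample_rate  # fraction of requests to sample, 0.0 to 1.0
        self.rng = rng                  # injectable so the sampling can be tested
        self.sent = 0
        self.failed = 0

    def maybe_run(self, silent_query, *args):
        if self.rng() >= self.sample_rate:
            return  # this request is not part of the sampled subset
        self.sent += 1
        try:
            silent_query(*args)  # the result is discarded; the user never sees it
        except Exception:
            # A failure in the dark path is counted but must not reach the user.
            self.failed += 1


def handle_request(user_id, serve_page, dark_launch, silent_query):
    """Serve the normal page and, for sampled requests, also exercise the new backend."""
    response = serve_page(user_id)
    dark_launch.maybe_run(silent_query, user_id)
    return response
```

Raising `sample_rate` gradually is one way to ramp load onto the new code path while watching the `sent` and `failed` counters.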

Some of the levers at our disposal were:

- Altering Facebook Chat to be less feature-rich by turning off typing notifications, new item notifications, and slowing down how often clients refresh their buddy lists.

- Decreasing the default number of stories on the Home and Profile pages.

- Switching off entire parts of the site like the bottom chat bar, the highlights to the right of news feed, “People You May Know”, and commenting on and liking stories.

- “Nuclear Options”: In the event that Facebook became overwhelmed with traffic and suffered performance problems as a result, we also prepared what we called “Nuclear Options”, such as cutting off nearly all the functionality on the Profile page, turning off Facebook Chat, and completely disabling the Home page. Each of these options was an absolute last resort to keep the site functional, as it would have resulted in a severely degraded user experience.
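A lever of the kind listed above behaves much like a runtime feature flag. The sketch below shows the general shape under stated assumptions: the lever names, the default story count of 30, and the `Levers` and `render_home` helpers are all hypothetical and chosen only for illustration.

```python
# Hypothetical lever names and defaults; the real values are not given in this post.
DEFAULT_LEVERS = {
    "chat_typing_notifications": True,
    "home_story_count": 30,
    "people_you_may_know": True,
    "home_page_enabled": True,  # switching this off would be a "nuclear option"
}


class Levers:
    """In-memory registry of runtime switches that operators can change quickly."""

    def __init__(self, defaults=DEFAULT_LEVERS):
        self._values = dict(defaults)

    def get(self, name):
        return self._values[name]

    def pull(self, name, value):
        # Reject unknown names so a typo cannot silently create a new lever.
        if name not in self._values:
            raise KeyError(f"unknown lever: {name}")
        self._values[name] = value


def render_home(levers, stories):
    """Build the Home page payload, degrading according to the current levers."""
    if not levers.get("home_page_enabled"):
        return {"stories": [], "sidebar": []}
    sidebar = ["people_you_may_know"] if levers.get("people_you_may_know") else []
    return {"stories": stories[: levers.get("home_story_count")], "sidebar": sidebar}
```

For example, `levers.pull("home_story_count", 10)` would cut the number of stories rendered per page without a code deploy, which is the property that makes a lever useful during an incident.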

All this preparation paid off on launch night. In the first three minutes over 200,000 people registered names, with over 1 million allocated in the first hour, and none of our “nuclear option” levers had to be used at any point. Throughout the entire launch we had no issues handling the additional load.

Our next big event is coming up and we’re already stressing the infrastructure to ensure a smooth launch. If the challenges of supporting the infrastructure behind one of the largest sites on the Internet excite you, check out our open SRE, Engineering, and other Operations positions at facebook.com/careers.