Text is a prevalent form of communication on Facebook. Understanding the various ways text is used on Facebook can help us improve people's experiences with our products, whether we're surfacing more of the content that people want to see or filtering out undesirable content like spam.

With this goal in mind, we built DeepText, a deep learning-based text understanding engine that can understand with near-human accuracy the textual content of several thousand posts per second, spanning more than 20 languages.

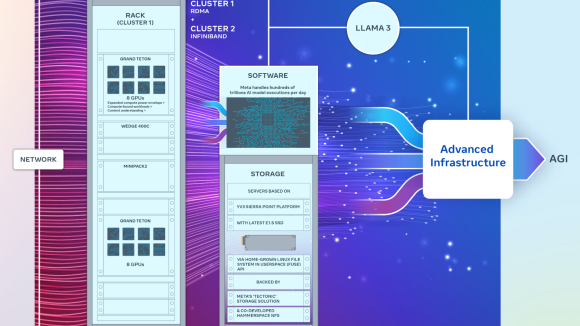

DeepText leverages several deep neural network architectures, including convolutional and recurrent neural nets, and can perform both word-level and character-level learning. We use FBLearner Flow and Torch for model training. Trained models are served with a click of a button through the FBLearner Predictor platform, which provides a scalable and reliable model distribution infrastructure. Facebook engineers can easily build new DeepText models through the self-serve architecture that DeepText provides.

Why deep learning

Text understanding includes multiple tasks, such as general classification to determine what a post is about — basketball, for example — and recognition of entities, like the names of players, stats from a game, and other meaningful information. But to get closer to how humans understand text, we need to teach the computer to understand things like slang and word-sense disambiguation. As an example, if someone says, “I like blackberry,” does that mean the fruit or the device?

Text understanding on Facebook requires solving tricky scaling and language challenges where traditional NLP techniques are not effective. Using deep learning, we are able to understand text better across multiple languages and use labeled data much more efficiently than traditional NLP techniques. DeepText has built on and extended ideas in deep learning that were originally developed in papers by Ronan Collobert and Yann LeCun from Facebook AI Research.

Understanding more languages faster

The community on Facebook is truly global, so it's important for DeepText to understand as many languages as possible. Traditional NLP techniques require extensive preprocessing logic built on intricate engineering and language knowledge. There are also variations within each language, as people use slang and different spellings to communicate the same idea. Using deep learning, we can reduce the reliance on language-dependent knowledge, as the system can learn from text with little or no preprocessing. This helps us span multiple languages quickly, with minimal engineering effort.

Deeper understanding

In traditional NLP approaches, words are converted into a format that a computer algorithm can learn. The word “brother” might be assigned an integer ID such as 4598, while the word “bro” becomes another integer, like 986665. This representation requires each word to be seen with exact spellings in the training data to be understood.
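To make the limitation concrete, here is a minimal sketch of an integer-ID vocabulary. The two IDs are the ones quoted above; the `UNKNOWN_ID` placeholder, the `encode` helper, and the misspelling "brotha" are assumptions added for illustration.

```python
# Toy vocabulary: the IDs for "brother" and "bro" are the ones quoted above.
# UNKNOWN_ID is an assumed placeholder for words never seen in training.
UNKNOWN_ID = 0

vocab = {"brother": 4598, "bro": 986665}


def encode(tokens, vocab):
    """Map each token to its integer ID, or UNKNOWN_ID if it was never seen."""
    return [vocab.get(token, UNKNOWN_ID) for token in tokens]


ids = encode(["brother", "bro", "brotha"], vocab)
print(ids)  # [4598, 986665, 0]
```

Nothing about the numbers 4598 and 986665 says the two words are related, and the unseen spelling "brotha" collapses into the same bucket as every other unknown word.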

With deep learning, we can instead use “word embeddings,” a mathematical concept that preserves the semantic relationship among words. When the embeddings are calculated properly, those of “brother” and “bro” lie close together in the embedding space. This type of representation allows us to capture the deeper semantic meaning of words.
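One common way to measure "close in space" is cosine similarity. The sketch below assumes that metric and uses hand-picked three-dimensional vectors purely for illustration; real embeddings are learned from data and have many more dimensions, and none of these numbers come from DeepText.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: values near 1.0 mean similar direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical, hand-picked vectors; not learned and not taken from any real model.
embeddings = {
    "brother": [0.90, 0.80, 0.10],
    "bro": [0.85, 0.75, 0.20],
    "blackberry": [0.10, 0.20, 0.95],
}

sim_close = cosine_similarity(embeddings["brother"], embeddings["bro"])
sim_far = cosine_similarity(embeddings["brother"], embeddings["blackberry"])
print(sim_close > sim_far)  # True
```

With these toy vectors, "brother" and "bro" point in nearly the same direction, while "blackberry" points elsewhere.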

Using word embeddings, we can also understand the same semantics across multiple languages, despite differences in the surface form. As an example, for English and Spanish, “happy birthday” and “feliz cumpleaños” should be very close to each other in the common embedding space. By mapping words and phrases into a common embedding space, DeepText is capable of building models that are language-agnostic.

Labeled data scarcity

Written language, despite the variations mentioned above, has a lot of structure that can be extracted from unlabeled text using unsupervised learning and captured in embeddings. Deep learning offers a good framework to leverage these embeddings and refine them further using small labeled data sets. This is a significant advantage over traditional methods, which often require large amounts of human-labeled data that are inefficient to generate and difficult to adapt to new tasks. In many cases, this combination of unsupervised learning and supervised learning significantly improves performance, as it compensates for the scarcity of labeled data sets.

Exploring DeepText on Facebook

DeepText is already being tested on some Facebook experiences. In the case of Messenger, for example, DeepText is used by the AML Conversation Understanding team to get a better understanding of when someone might want to go somewhere. It's used for intent detection, which helps the system recognize that a person is not looking for a taxi when he or she says something like, “I just came out of the taxi,” as opposed to “I need a ride.”

We're also beginning to use high-accuracy, multi-language DeepText models to help people find the right tools for their purpose. For example, someone could write a post that says, “I would like to sell my old bike for $200, anyone interested?” DeepText would be able to detect that the post is about selling something, extract the meaningful information such as the object being sold and its price, and prompt the seller to use existing tools that make these transactions easier through Facebook.
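The sketch below shows the shape of the structured result such a system might return for the example post. It is a hand-written, rule-based stand-in and not how DeepText works: the regular expressions, the `extract_sale` helper, and the output field names are all assumptions, and a learned model would be expected to handle phrasings these rules miss.

```python
import re

# Hypothetical patterns for illustration; they cover only phrasings like the example post.
PRICE_PATTERN = re.compile(r"\$(\d+(?:\.\d{2})?)")
SELL_PATTERN = re.compile(r"\bsell\s+my\s+(.+?)\s+for\b", re.IGNORECASE)


def extract_sale(post):
    """Return intent, item, and price if the post looks like a sale offer, else None."""
    item = SELL_PATTERN.search(post)
    price = PRICE_PATTERN.search(post)
    if item is None or price is None:
        return None
    return {
        "intent": "sell",
        "item": item.group(1),
        "price_usd": float(price.group(1)),
    }


post = "I would like to sell my old bike for $200, anyone interested?"
result = extract_sale(post)
print(result)  # {'intent': 'sell', 'item': 'old bike', 'price_usd': 200.0}
```

Rules like these break as soon as the wording changes ("bike up for grabs, 200 bucks"), which is the kind of variation a learned model is meant to absorb.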

DeepText has the potential to further improve Facebook experiences by understanding posts better to extract intent, sentiment, and entities (e.g., people, places, events), using mixed content signals like text and images, and automating the removal of objectionable content like spam. Many celebrities and public figures use Facebook to start conversations with the public. These conversations often draw hundreds or even thousands of comments. Finding the most relevant comments in multiple languages while maintaining comment quality is currently a challenge, and surfacing the most relevant or high-quality comments is one that DeepText may be able to address.

Next steps

We are continuing to advance DeepText technology and its applications in collaboration with the Facebook AI Research group. Here are some examples.

Better understanding people's interests

Part of personalizing people's experiences on Facebook is recommending content that is relevant to their interests. In order to do this, we must be able to map any given text to a particular topic, which requires massive amounts of labeled data.

While such data sets are hard to produce manually, we are testing the ability to generate large data sets with semi-supervised labels using public Facebook pages. It's reasonable to assume that the posts on these pages will represent a dedicated topic — for example, posts on the Steelers page will contain text about the Steelers football team. Using this content, we train a general interest classifier we call PageSpace, which uses DeepText as its underlying technology. In turn, this could further improve the text understanding system across other Facebook experiences.
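The labeling idea can be sketched in a few lines: every post inherits the topic of the page it appeared on. The page names, topics, sample posts, and the `build_weakly_labeled_set` helper below are hypothetical; this is not the PageSpace pipeline, only an illustration of deriving labels without manual annotation.

```python
def build_weakly_labeled_set(posts_by_page, topic_by_page):
    """Label each post with the topic of its page, skipping pages with no known topic."""
    examples = []
    for page, posts in posts_by_page.items():
        topic = topic_by_page.get(page)
        if topic is None:
            continue  # no assumed topic for this page, so its posts stay unlabeled
        for text in posts:
            examples.append((text, topic))
    return examples


# Hypothetical sample data for illustration only.
topic_by_page = {"Steelers": "football", "Baking Daily": "cooking"}
posts_by_page = {
    "Steelers": ["Great win on Sunday!", "Training camp opens next week."],
    "Baking Daily": ["Try this sourdough recipe."],
    "Misc Page": ["Hello world."],
}

training_set = build_weakly_labeled_set(posts_by_page, topic_by_page)
print(len(training_set))  # 3
```

The labels are noisy, since not every post on a page is about the page's topic, but they cost nothing to produce and can be generated at scale.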

Joint understanding of textual and visual content

Often people post images or videos and also describe them using some related text. In many of those cases, understanding intent requires understanding both textual and visual content together. As an example, a friend may post a photo of his or her new baby with the text “Day 25.” The combination of the image and text makes it clear that the intent here is to share family news. We are working with Facebook's visual content understanding teams to build new deep learning architectures that learn intent jointly from textual and visual inputs.

New deep neural network architectures

We continue to develop and investigate new deep neural network architectures. Bidirectional recurrent neural nets (BRNNs) show promising results, as they aim to capture both contextual dependencies between words through recurrence and position-invariant semantics through convolution. We have observed that BRNNs achieve lower error rates than regular convolutional or recurrent neural nets for classification; in some cases the error rates are as low as 20 percent.
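As a rough illustration of the bidirectional idea, the sketch below runs a simple tanh recurrent pass over a sequence in both directions and concatenates the two hidden states at each position. It covers only the recurrent part, with no convolution and no training, and the layer sizes, random weights, and helper names are assumptions for illustration, not DeepText's architecture.

```python
import math
import random


def rnn_pass(inputs, w_in, w_rec):
    """Run a simple tanh recurrent layer over inputs; return the hidden state at each step."""
    hidden_size = len(w_rec)
    h = [0.0] * hidden_size
    states = []
    for x in inputs:
        # New state mixes the current input with the previous hidden state.
        h = [
            math.tanh(
                sum(w_in[i][j] * x[j] for j in range(len(x)))
                + sum(w_rec[i][k] * h[k] for k in range(hidden_size))
            )
            for i in range(hidden_size)
        ]
        states.append(h)
    return states


def bidirectional_states(inputs, fwd, bwd):
    """Concatenate left-to-right and right-to-left hidden states for every position."""
    forward = rnn_pass(inputs, *fwd)
    backward = rnn_pass(inputs[::-1], *bwd)[::-1]  # re-align to original order
    return [f + b for f, b in zip(forward, backward)]


def random_matrix(rows, cols, rng):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


rng = random.Random(0)  # fixed seed so the untrained weights are reproducible
input_size, hidden_size = 3, 2
fwd = (random_matrix(hidden_size, input_size, rng), random_matrix(hidden_size, hidden_size, rng))
bwd = (random_matrix(hidden_size, input_size, rng), random_matrix(hidden_size, hidden_size, rng))

# Three one-hot "word" vectors standing in for a three-word sentence.
sequence = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
states = bidirectional_states(sequence, fwd, bwd)
print(len(states), len(states[0]))  # 3 4
```

Each position ends up with a state that reflects the words before it (forward half) and the words after it (backward half), which is what lets a classifier on top use context from both sides.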

While applying deep learning techniques to text understanding will continue to enhance Facebook products and experiences, the reverse is also true. The unstructured data on Facebook presents a unique opportunity for text understanding systems to learn automatically on language as it is naturally used by people across multiple languages, which will further advance the state of the art in natural language processing.